Algorithmic Profiling and Automated Decision-Making in Criminal Justice

Max Planck Fellow Group

The research group “Algorithmic Profiling and Automated Decision-Making in Criminal Justice” is dedicated to legal issues that arise from the use of artificial intelligence (AI) in the areas of crime detection, prosecution, and sentencing of criminal offenses. Its aim is to examine whether traditional criminal law doctrine and existing criminal law practice provide convincing answers to the questions posed by the use of AI systems; where this is not the case, innovative solutions will be developed. The various projects make use of legal methodology, comparative legal analysis, and computer science.

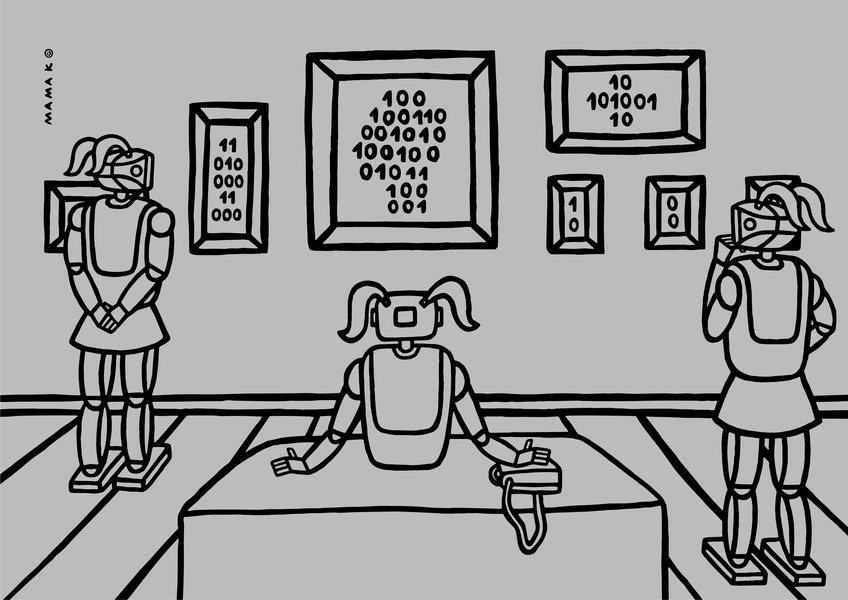

Graph: © Mamak

Research Topics

The research group is open to research projects on all issues that arise from the use of AI in criminal law and criminal procedure. These include questions of criminal responsibility when AI acts as a new kind of actor, the potential and limits of automated application of law in criminal justice (for example, algorithmic sentencing), and new regulatory and supervisory models for the use of AI in criminal justice systems.

Projects

Head of project: Lea Bachmann

With the advent of artificial intelligence (AI) systems, new players are entering the corporate arena. While they offer many advantages, they also pose new risks. Using the example of AI systems for anti-money laundering, this doctoral project will analyze the potential…

Head of project: Colin Carter

Large language models are advanced deep learning algorithms designed to understand, summarize, translate, predict, and generate text. They are trained on large datasets, which enables them to mimic human-like language abilities. Recently, these models have gained…

Head of project: Laura D’Amico

As the century proceeds, artificial intelligence (AI) will become increasingly present, not only in our daily lives but also in courtrooms. Using both theoretical and practical approaches, the aims of this research project are, on the one hand, to analyze who might…

Head of project: Linus Ensel

This research project focuses on the potential advantages of a partial rationalization of the sentencing process. Such an intervention in the existing system would reduce judicial discretion and would raise the question of the role human…

Head of project: Sabine Gless

Are programmers the new lawmakers, as Joseph Weizenbaum insinuated back in the last century? And will they remake the criminal justice universe? Certainly, AI systems will continue to replace human decision-making at various points within the criminal justice system…

Head of project: Elina Nerantzi

Can a harmful artificial intelligence (AI) agent be held directly criminally responsible? This project seeks a new way to address this recurring question. Instead of trying to ascertain whether AI agents could ever be our moral duplicates, responsive as we are to…